The challenge of choice

Dozens of platforms now claim to bring AI into QA, but not all are built for your environment. The right tool complements your existing automation, connects to your CI/CD system, and delivers value without disrupting how your engineers already work.

Evaluation checklist

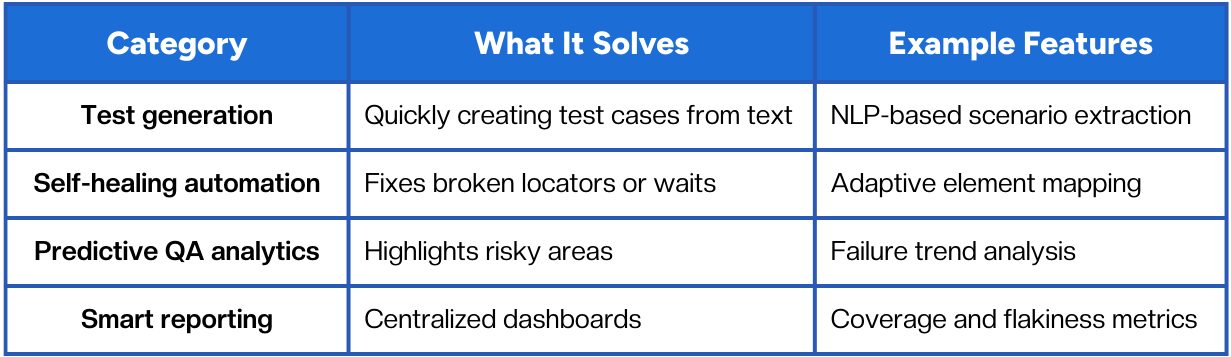

When comparing options, focus on these dimensions:

- Compatibility: Works with your frameworks (Playwright, Cypress, Selenium, etc.).

- Integration: Connects to GitHub Actions, Jenkins, or other CI/CD pipelines.

- AI maturity: Goes beyond “auto-generate tests” to include prioritization or self-healing.

- Reporting: Provides clear dashboards for dev, QA, and product leads.

- Maintenance impact: Reduces upkeep rather than adding to it.

A tool that checks four out of five boxes is likely a fit; one that demands a full rebuild of your stack probably isn’t.
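The rule of thumb above can be expressed as a small scoring function. This is a minimal sketch under stated assumptions: the `Candidate` type, its field names, and treating a full stack rebuild as an automatic disqualifier are illustrative choices, not a published evaluation method for any particular tool.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    """Hypothetical evaluation record; fields mirror the five checklist dimensions."""
    name: str
    compatibility: bool
    integration: bool
    ai_maturity: bool
    reporting: bool
    maintenance_impact: bool
    requires_full_rebuild: bool = False  # assumption: tracked separately as a deal-breaker

    def score(self) -> int:
        """Count how many of the five checklist boxes this tool checks."""
        return sum([
            self.compatibility,
            self.integration,
            self.ai_maturity,
            self.reporting,
            self.maintenance_impact,
        ])


def is_likely_fit(c: Candidate, threshold: int = 4) -> bool:
    """Assumed rule: a full rebuild disqualifies; otherwise require at least `threshold` boxes."""
    if c.requires_full_rebuild:
        return False
    return c.score() >= threshold


if __name__ == "__main__":
    tool_a = Candidate("tool-a", True, True, True, False, True)
    tool_b = Candidate("tool-b", True, True, True, True, True, requires_full_rebuild=True)
    for tool in (tool_a, tool_b):
        print(tool.name, tool.score(), is_likely_fit(tool))
```

Keeping the rebuild check separate from the score reflects the text's point that a perfect feature match still may not justify replacing a working stack.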

Adoption strategy

- Start with a problem, not a feature. Identify pain points such as flaky tests or long regression cycles.

- Run a contained pilot. Integrate the new tool into one project and monitor changes in failure rate and cycle time.

- Measure and share. Track improvements in test stability and team velocity; use that data to guide expansion.

- Train the team. AI insights are only valuable when engineers trust and understand them.
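To make "monitor changes in failure rate and cycle time" concrete, the sketch below summarizes CI test results for a baseline period and a pilot period. It assumes a hypothetical `TestRun` record exported from your CI system (test name, commit, outcome, duration); real CI platforms and testing tools expose this data in their own formats. A test counts as flaky here if it both passed and failed on the same commit, which is one common heuristic rather than a universal definition, and summed test durations per commit serve only as a rough proxy for cycle time (it assumes tests run sequentially).

```python
from collections import defaultdict
from dataclasses import dataclass
from statistics import mean


@dataclass
class TestRun:
    """Hypothetical record of one test execution exported from CI."""
    test: str
    commit: str
    passed: bool
    duration_s: float


def failure_rate(runs: list[TestRun]) -> float:
    """Fraction of executions that failed."""
    if not runs:
        return 0.0
    return sum(not r.passed for r in runs) / len(runs)


def flaky_tests(runs: list[TestRun]) -> set[str]:
    """Tests that both passed and failed on the same commit."""
    outcomes: dict[tuple[str, str], set[bool]] = defaultdict(set)
    for r in runs:
        outcomes[(r.test, r.commit)].add(r.passed)
    return {test for (test, _commit), seen in outcomes.items() if len(seen) == 2}


def mean_suite_time(runs: list[TestRun]) -> float:
    """Average summed test duration per commit (a rough cycle-time proxy)."""
    per_commit: dict[str, float] = defaultdict(float)
    for r in runs:
        per_commit[r.commit] += r.duration_s
    return mean(per_commit.values()) if per_commit else 0.0


def summarize(runs: list[TestRun]) -> dict:
    return {
        "failure_rate": round(failure_rate(runs), 3),
        "flaky_tests": sorted(flaky_tests(runs)),
        "mean_suite_time_s": round(mean_suite_time(runs), 1),
    }


if __name__ == "__main__":
    baseline = [
        TestRun("login", "c1", True, 2.0),
        TestRun("login", "c1", False, 2.5),  # same commit, different outcome: flaky
        TestRun("checkout", "c1", False, 4.0),
        TestRun("checkout", "c2", True, 3.5),
    ]
    pilot = [
        TestRun("login", "c3", True, 1.5),
        TestRun("checkout", "c3", True, 3.0),
    ]
    print("baseline", summarize(baseline))
    print("pilot", summarize(pilot))
```

Comparing the two summaries side by side gives the kind of before-and-after data the "Measure and share" step calls for.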

Over time, combine complementary tools (for example, one for analytics and another for self-healing) to build a flexible, evolving stack.

Typical categories of AI testing tools

Conclusion

AI testing tools are most effective when they strengthen, not replace, your QA foundation. The right combination of test frameworks and AI-driven analysis can remove bottlenecks, improve visibility, and give your releases the confidence they need.

Begin by tackling one concrete problem (flaky tests, maintenance cost, or release delays) and let data guide your next steps.
